People such as Hermann Hauser, a co-founder of ARM, and industry big hitters such as IBM and Microsoft say machine learning is the next big wave in computing after smartphones.

But machine learning today consumes huge resources and is trapped in warehouses full of hot computers. In 2012, Google needed 16,000 processor cores burning megawatts of power just to learn to recognise cats in videos. This inefficiency severely limits machine learning applications in autonomous systems such as robots and unmanned vehicles, and in the billions of sensors the Internet of Things will bring. Even with cheaper graphics processing unit (GPU) implementations, these systems would still be too big and too hot, or too tethered to a warehouse and a power station, to learn independently.

So we see a great opportunity in bridging the efficiency gap.

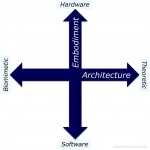

We believe we know how. We are Artificial Learning Ltd, working with top UK universities to develop novel, ultra-efficient silicon chips specifically for machine learning.

We want to make machine learning thousands – perhaps millions – of times more efficient. Instead of needing a warehouse and a power station, our integrated circuit designs will enable powerful machine learning to be embedded in devices that can sit in the palm of your hand and run on batteries.
